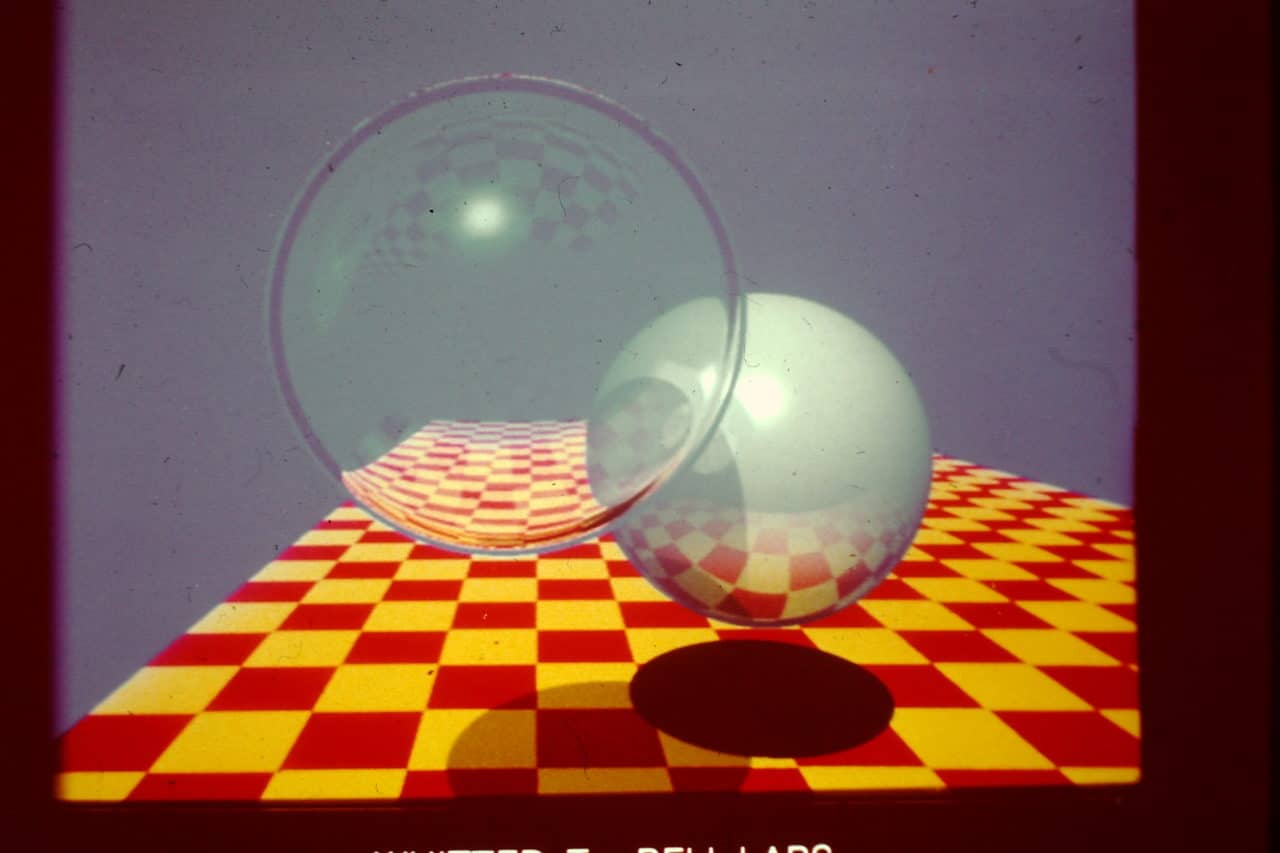

Yeah, the history of ray tracing is pretty crazy, and Peter Shirley is "the man." You want to learn ray tracing, you MUST have his books and his research articles. I'm not calling him a liar; I'm blaming myself for my poor coding hahaha! But he did that NVIDIA scene in 2006 and claimed 3-4 frames per second "in software" and I do believe him as he is "the ray tracing man." The dude pushes what can be done in software and it's super interesting, and it's what I wanted to experiment with back in 2016 before everything in my life started falling apart LMFAO! I have some catching up to do!

The concept of the ray tracing AABB tree is simple. Every triangle has a centroid and a min-max bounding box. You start with the AABB of the whole model and divide it into k bins along its dominant (longest) axis. You assign each triangle to one of the k bins depending on which bin its centroid falls in. After binning, you shrink each bin's AABB so it encompasses only the triangles assigned to it. When a bin has n triangles or fewer, that bin is a leaf node and you sort the index buffer so its triangles are contiguous (so the leaf only needs to store a pointer to the triangle data). If a bin has more than n triangles, you repeat the subdivision process on it.
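The steps above can be sketched roughly like this. This is not my actual builder, just a minimal illustration; the names `AABB`, `TriInfo`, `Node` and `Build` are made up for the example, and it assumes each triangle's centroid and box were computed beforehand. It also adds one safety check the description doesn't mention: if every centroid lands in the same bin, it stops and makes a leaf so the recursion can't loop forever.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <limits>
#include <utility>
#include <vector>

struct AABB {
    float lo[3], hi[3];
    AABB() { // start "inverted" so the first grow() sets real bounds
        for (int a = 0; a < 3; ++a) {
            lo[a] = std::numeric_limits<float>::max();
            hi[a] = std::numeric_limits<float>::lowest();
        }
    }
    void grow(const AABB& b) {
        for (int a = 0; a < 3; ++a) {
            lo[a] = std::min(lo[a], b.lo[a]);
            hi[a] = std::max(hi[a], b.hi[a]);
        }
    }
};

// Hypothetical per-triangle input: centroid plus min-max box.
struct TriInfo { float centroid[3]; AABB box; };

struct Node {
    AABB box;
    uint32_t first = 0, count = 0;   // range in the sorted index buffer
    std::vector<uint32_t> children;  // empty for leaf nodes
};

// Builds the subtree for idx[first, first + count) and returns its node index.
// k = bins per split, n = max triangles per leaf.
uint32_t Build(std::vector<Node>& nodes, std::vector<uint32_t>& idx,
               const std::vector<TriInfo>& tris,
               uint32_t first, uint32_t count, uint32_t k, uint32_t n)
{
    assert(k >= 2 && n >= 1 && count >= 1);
    const uint32_t self = static_cast<uint32_t>(nodes.size());
    nodes.emplace_back();
    nodes[self].first = first;
    nodes[self].count = count;
    // AABB encompasses only the triangles in this node
    for (uint32_t i = first; i < first + count; ++i)
        nodes[self].box.grow(tris[idx[i]].box);
    const AABB box = nodes[self].box; // copy: 'nodes' may reallocate below

    // dominant (longest) axis
    int axis = 0;
    for (int a = 1; a < 3; ++a)
        if (box.hi[a] - box.lo[a] > box.hi[axis] - box.lo[axis]) axis = a;
    const float extent = box.hi[axis] - box.lo[axis];
    if (count <= n || extent <= 0.0f) return self; // leaf

    // associate each triangle with the bin containing its centroid
    std::vector<std::vector<uint32_t>> bins(k);
    for (uint32_t i = first; i < first + count; ++i) {
        const float t = (tris[idx[i]].centroid[axis] - box.lo[axis]) / extent;
        const uint32_t b = std::min(k - 1, static_cast<uint32_t>(std::max(0.0f, t) * k));
        bins[b].push_back(idx[i]);
    }
    for (const auto& b : bins)
        if (b.size() == count) return self; // binning can't separate them: leaf

    // "sort" the index buffer so each bin's triangles are contiguous
    uint32_t offset = first;
    std::vector<std::pair<uint32_t, uint32_t>> ranges;
    for (const auto& b : bins) {
        if (b.empty()) continue;
        std::copy(b.begin(), b.end(), idx.begin() + offset);
        ranges.emplace_back(offset, static_cast<uint32_t>(b.size()));
        offset += static_cast<uint32_t>(b.size());
    }
    // repeat the subdivision on every non-empty bin
    for (const auto& r : ranges) {
        const uint32_t child = Build(nodes, idx, tris, r.first, r.second, k, n);
        nodes[self].children.push_back(child);
    }
    return self;
}
```

You'd call it once with `first = 0` and `count = idx.size()`, where `idx` starts out as 0, 1, 2, ... and ends up reordered so every leaf is just a (first, count) range into it.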

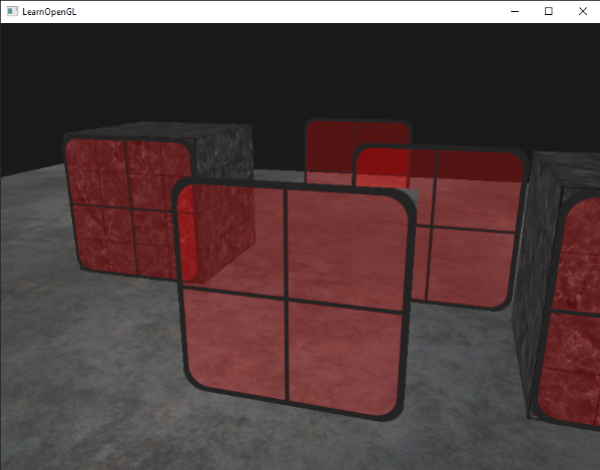

Also regarding those pics I posted... yes 4 minutes, but as you mentioned there are some caveats:

#1 = The models are low poly.

#2 = Over 50% of the scene is blank space and there is very little transparency. The AABB tree is very fast when there are large sections of empty space. Most of the time spent ray tracing is spent on ray-triangle intersection tests. Put that model in a single room covering the whole rendering area and yeah, time will nearly double.
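To show why empty space is so cheap: a ray that misses a node's box gets rejected with a few multiplies and compares, and never reaches the ray-triangle tests underneath. Below is a minimal sketch of the usual "slab" ray-box test. It is not lifted from my tracer; `Ray`, `Box` and `RayHitsBox` are names invented for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <utility>

struct Ray { float origin[3]; float dir[3]; };
struct Box { float lo[3]; float hi[3]; };

// Slab test: true if the ray overlaps the box for some t in [tmin, tmax].
bool RayHitsBox(const Ray& r, const Box& b, float tmin, float tmax)
{
    assert(tmin <= tmax);
    for (int a = 0; a < 3; ++a) {
        if (r.dir[a] == 0.0f) {
            // ray is parallel to this pair of planes: miss if it starts outside them
            if (r.origin[a] < b.lo[a] || r.origin[a] > b.hi[a]) return false;
            continue;
        }
        const float inv = 1.0f / r.dir[a];
        float t0 = (b.lo[a] - r.origin[a]) * inv;
        float t1 = (b.hi[a] - r.origin[a]) * inv;
        if (t0 > t1) std::swap(t0, t1);
        // shrink the valid interval; once it is empty the ray misses
        tmin = std::max(tmin, t0);
        tmax = std::min(tmax, t1);
        if (tmin > tmax) return false;
    }
    return true;
}
```

A ray heading into blank background typically fails this test near the top of the tree, so it costs a handful of box tests and zero triangle tests.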

There is a great journal article on this by the guy that ray traced Quake III, where he did a heat map for time-taken-per-pixel; it's an interesting read. Transparency, especially things like trees that were far away, destroyed his frame rate.

#3 = Only 4 samples per pixel. The pictures I posted look like shit. Lots of artifacts and aliasing along edges. Sampling kills. The guys that ray traced Quake III only used 1, 2, 4, and 8 samples per pixel. I don't even want to think what these models would look like if I placed them far away LMAO! Look at https://dl.acm.org/doi/fullHtml/10.1145/3447807. They are using a minimum of 100 samples per pixel and it takes 30 seconds to render a scene (though they are doing GPU accel). At 100 samples per pixel, notice that some of their pictures look like CRAP! So yeah, if I used 100 samples per pixel you are indeed looking at an hour! But with game models, do you really need 100 samples per pixel, or even 1,000? Do we really need Blender Cycles quality?

#4 = Also, remember XNALara and XPS only have up to three directional lights. No fancy lighting here. I think those pics I posted used only one of those directional lights, the default one. My source notes have:


#pragma region LIGHTS

// default XNALara/XPS light

// angle horizontal = 315 (0 = shadow to right, 90 = shadow to front, 180 = shadow to left, 360 = shadow to right)

// angle vertical = 35 (-90, light on bottom, 0 = no shadow, 90 = light on top)

// intensity = 1.1

// shadow depth = 0.4

// <horz, vert>

// this rotates about z-axis, changing vert

// <0, 90> = <0, -1, 0> // light above

// <0, 0> = <-1, 0, 0> // light on side

// <0, -90> = <0, 1, 0> // light below

struct directional_light {

bool enabled;

real32 angle_horz;

real32 angle_vert;

vector3D direction;

vector4D<real32> color;

real32 intensity;

real32 shadow_depth;

};

static directional_light lightlist[3];

void ComputeLightDirection(vector3D& D, real32 horz, real32 vert)

{

real32 r1 = cs::math::radians(horz);

real32 r2 = cs::math::radians(vert);

real32 m[16];

cs::math::rotation_matrix4x4_YZ(m, r1, r2); // order is Z then Y

real32 v[3] = { 1.0f, 0.0f, 0.0f };

real32 x[3];

cs::math::matrix4x4_mul_vector3D(x, m, v);

cs::math::vector3D_normalize(x);

D.x = -x[0];

D.y = -x[1];

D.z = -x[2];

}

void InitLights(void)

{

#define L1_DEFAULT lightlist[0].angle_horz = 315.0f; \
lightlist[0].angle_vert = 35.0f;

#define L1_FRONT lightlist[0].angle_horz = 270.0f; \
lightlist[0].angle_vert = 0.0f;

// light #1

L1_DEFAULT;

lightlist[0].enabled = true;

// lightlist[1].angle_horz = 0.0f;

// lightlist[1].angle_vert = 0.0f;

lightlist[0].color.x = lightlist[0].color.y = lightlist[0].color.z = lightlist[0].color.w = 1.0f;

lightlist[0].intensity = 1.1f;

lightlist[0].shadow_depth = 0.4f;

ComputeLightDirection(lightlist[0].direction, lightlist[0].angle_horz, lightlist[0].angle_vert);

std::cout << "dir = <" << lightlist[0].direction.x << "," << lightlist[0].direction.y << "," << lightlist[0].direction.z << ">" << std::endl;

// light #2

lightlist[1].enabled = false;

lightlist[1].angle_horz = 225.0f;

lightlist[1].angle_vert = 35.0f;

lightlist[1].color.x = lightlist[1].color.y = lightlist[1].color.z = lightlist[1].color.w = 1.0f;

lightlist[1].intensity = 0.0f;

lightlist[1].shadow_depth = 0.4f;

ComputeLightDirection(lightlist[1].direction, lightlist[1].angle_horz, lightlist[1].angle_vert);

// light #3

lightlist[2].enabled = false;

lightlist[2].angle_horz = 180.0f;

lightlist[2].angle_vert = 90.0f;

lightlist[2].color.x = lightlist[2].color.y = lightlist[2].color.z = lightlist[2].color.w = 1.0f;

lightlist[2].intensity = 0.0f;

lightlist[2].shadow_depth = 0.4f;

ComputeLightDirection(lightlist[2].direction, lightlist[2].angle_horz, lightlist[2].angle_vert);

}

#pragma endregion
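If you don't have my cs::math library, the angle-to-direction mapping in the comments above can be reproduced with plain trig. This is a hypothetical stand-in for ComputeLightDirection, not the real thing: `Dir3` and `LightDirection` are names made up for the example, and the sign convention of the rotation about Y (the horizontal angle) is an assumption. Only the horz = 0 cases are pinned down by the comments.

```cpp
#include <cassert>
#include <cmath>

struct Dir3 { float x, y, z; };

// Start at +X, rotate about Z by 'vert', then about Y by 'horz', then negate,
// so <0, 90> gives <0, -1, 0> (light above, pointing down).
Dir3 LightDirection(float horz_deg, float vert_deg)
{
    assert(vert_deg >= -90.0f && vert_deg <= 90.0f);
    const float kPi = 3.14159265358979f;
    const float h = horz_deg * kPi / 180.0f;
    const float v = vert_deg * kPi / 180.0f;

    // rotate <1, 0, 0> about the z-axis (changes the vertical angle)
    const float x = std::cos(v);
    const float y = std::sin(v);
    const float z = 0.0f;

    // rotate about the y-axis (ASSUMED sign convention for the horizontal angle)
    const float xr =  x * std::cos(h) + z * std::sin(h);
    const float zr = -x * std::sin(h) + z * std::cos(h);

    // negate: the stored direction points from the light toward the scene
    return { -xr, -y, -zr };
}
```

With the default light, `LightDirection(315.0f, 35.0f)` has a y component of -sin(35°), about -0.57, so the light comes from above, which matches the "90 = light on top" note.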

My render group code is not very efficient, but it's there:


vector4D<unsigned char> RG27(const XNAMesh& mesh, uint32 face_index, const cs::math::ray3D<real32>& ray, float u, float v, float w)

{

using cs::binary32;

using cs::math::vector2D;

using cs::math::vector3D;

// triangle vertices

const XNAVertex& v1 = mesh.verts[mesh.faces[face_index].refs[0]];

const XNAVertex& v2 = mesh.verts[mesh.faces[face_index].refs[1]];

const XNAVertex& v3 = mesh.verts[mesh.faces[face_index].refs[2]];

// face UVs

cs::math::vector2D<real32> uv1(v1.uv[0]);

cs::math::vector2D<real32> uv2(v2.uv[0]);

cs::math::vector2D<real32> uv3(v3.uv[0]);

// face UVs (edges)

cs::math::vector2D<real32> te1 = uv2 - uv1;

cs::math::vector2D<real32> te2 = uv3 - uv1;

// you can't bump map degenerate UVs

real32 scale = (te1[0]*te2[1] - te2[0]*te1[1]);

//

// interpolated UVs

//

// interpolated UV coordinates

cs::binary32 uv[2] = {

u*v1.uv[0][0] + v*v2.uv[0][0] + w*v3.uv[0][0],

u*v1.uv[0][1] + v*v2.uv[0][1] + w*v3.uv[0][1]

};

// diffuse sample

auto DM_sample = SampleTexture(mesh.textures[0], uv[0], uv[1]);

//

// NORMAL MAPPING

//

binary32 N[3];

binary32 T[4];

binary32 B[3];

// interpolated normal

N[0] = u*v1.normal[0] + v*v2.normal[0] + w*v3.normal[0];

N[1] = u*v1.normal[1] + v*v2.normal[1] + w*v3.normal[1];

N[2] = u*v1.normal[2] + v*v2.normal[2] + w*v3.normal[2];

cs::math::vector3D_normalize(N);

if(enable_NM && !(std::abs(scale) < 1.0e-6f))

{

// interpolated tangent

T[0] = u*v1.tangent[0][0] + v*v2.tangent[0][0] + w*v3.tangent[0][0];

T[1] = u*v1.tangent[0][1] + v*v2.tangent[0][1] + w*v3.tangent[0][1];

T[2] = u*v1.tangent[0][2] + v*v2.tangent[0][2] + w*v3.tangent[0][2];

T[3] = u*v1.tangent[0][3] + v*v2.tangent[0][3] + w*v3.tangent[0][3];

cs::math::vector3D_normalize(T);

// N = T cross B (Z = X x Y)

// T = B cross N (X = Y x Z)

// B = N cross T (Y = Z x X)

cs::math::vector3D_vector_product(B, N, T);

B[0] *= T[3]; // for mirrored UVs

B[1] *= T[3]; // for mirrored UVs

B[2] *= T[3]; // for mirrored UVs

cs::math::vector3D_normalize(B);

// remap sample to [-1, +1]

auto NM_sample = SampleTexture(mesh.textures[1], uv[0], uv[1]);

real32 N_unperturbed[3] = {

NM_sample.x*2.0f - 1.0f,

NM_sample.y*2.0f - 1.0f,

NM_sample.z*2.0f - 1.0f

};

cs::math::vector3D_normalize(N_unperturbed);

// rotate normal

N[0] = N_unperturbed[0]*N[0] + N_unperturbed[1]*B[0] + N_unperturbed[2]*T[0];

N[1] = N_unperturbed[0]*N[1] + N_unperturbed[1]*B[1] + N_unperturbed[2]*T[1];

N[2] = N_unperturbed[0]*N[2] + N_unperturbed[1]*B[2] + N_unperturbed[2]*T[2];

cs::math::vector3D_normalize(N);

}

//

// ENVIRONMENT MAPPING

//

// matrix transform

float R[16] = {

-cam_L[0], -cam_L[1], -cam_L[2], 0.0f, // map -L to X

cam_U[0], cam_U[1], cam_U[2], 0.0f, // map +U to Y

-cam_D[0], -cam_D[1], -cam_D[2], 0.0f, // map -D to Z

0.0f, 0.0f, 0.0f, 1.0f

};

// view vector and normal in sphere map space

float v_p[3];

float n_p[3];

cs::math::matrix4x4_mul_vector3D(v_p, R, cam_D);

cs::math::matrix4x4_mul_vector3D(n_p, R, N);

cs::math::vector3D_normalize(v_p);

cs::math::vector3D_normalize(n_p);

// compute reflection vector in sphere map space

float dot2 = 2.0f*(n_p[0]*v_p[0] + n_p[1]*v_p[1] + n_p[2]*v_p[2]);

float rx = v_p[0] - dot2 * n_p[0]; // use original view direction for sphere map

float ry = v_p[1] - dot2 * n_p[1]; // use original view direction for sphere map

float rz = v_p[2] - dot2 * n_p[2]; // use original view direction for sphere map

// sphere map sample

real32 m = 2.0f*std::sqrt(rx*rx + ry*ry + (rz + 1)*(rz + 1));

real32 intpart;

real32 tu = modff((rx/m + 0.5f), &intpart);

real32 tv = modff((ry/m + 0.5f), &intpart);

auto SEM_sample = SampleTexture(mesh.textures[2], tu, tv);

// mix diffuse and environment map

real32 w2 = mesh.params.params[0];

real32 w1 = 1.0f - w2;

DM_sample.x = Saturate(DM_sample.x*w1 + SEM_sample.x*w2);

DM_sample.y = Saturate(DM_sample.y*w1 + SEM_sample.y*w2);

DM_sample.z = Saturate(DM_sample.z*w1 + SEM_sample.z*w2);

//

// LIGHTING

//

real32 wo[3] = {

-ray.direction[0],

-ray.direction[1],

-ray.direction[2],

};

vector3D<real32> L;

L[0] = 0.0f;

L[1] = 0.0f;

L[2] = 0.0f;

// factors

binary32 kd = (k_conservation ? 1.0f - ka : 1.0f);

// for each light

for(uint32 i = 0; i < 3; i++)

{

// light disabled

if(!lightlist[i].enabled) continue;

// wi is normalized vector "from hit point to light"

vector3D<real32> wi;

wi[0] = -lightlist[i].direction.x;

wi[1] = -lightlist[i].direction.y;

wi[2] = -lightlist[i].direction.z;

// add the diffuse term only if the surface faces the light (N dot wi > 0)

binary32 dot = cs::math::vector3D_scalar_product(N, &wi[0]);

if(dot > 0.0f) {

L[0] += kd*DM_sample.x*lightlist[i].intensity*lightlist[i].color.x*dot;

L[1] += kd*DM_sample.y*lightlist[i].intensity*lightlist[i].color.y*dot;

L[2] += kd*DM_sample.z*lightlist[i].intensity*lightlist[i].color.z*dot;

}

}

// ambient + all light contributions

DM_sample.x = Saturate(DM_sample.x*(ka*ambient_co[0]) + Saturate(L[0]));

DM_sample.y = Saturate(DM_sample.y*(ka*ambient_co[1]) + Saturate(L[1]));

DM_sample.z = Saturate(DM_sample.z*(ka*ambient_co[2]) + Saturate(L[2]));

// final value

vector4D<uint08> retval;

retval.x = static_cast<uint08>(255.0f*DM_sample.x);

retval.y = static_cast<uint08>(255.0f*DM_sample.y);

retval.z = static_cast<uint08>(255.0f*DM_sample.z);

retval.w = static_cast<uint08>(255.0f*DM_sample.w);

return retval;

}
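The environment mapping part is the piece people ask about most, so here it is pulled out on its own. This is a minimal sketch of the same reflect-then-sphere-map math, assuming the view vector and normal are already unit length and already in sphere map space; `UV`, `Reflect` and `SphereMapUV` are names invented for the example, not functions from my code.

```cpp
#include <cassert>
#include <cmath>

struct UV { float u, v; };

// Reflect view direction v about normal n: r = v - 2(n.v)n. Both must be unit length.
void Reflect(float r[3], const float v[3], const float n[3])
{
    const float dot2 = 2.0f * (n[0]*v[0] + n[1]*v[1] + n[2]*v[2]);
    for (int i = 0; i < 3; ++i) r[i] = v[i] - dot2 * n[i];
}

// Sphere map lookup: m = 2*sqrt(rx^2 + ry^2 + (rz + 1)^2), (u, v) = (rx/m + 0.5, ry/m + 0.5).
UV SphereMapUV(const float r[3])
{
    const float m = 2.0f * std::sqrt(r[0]*r[0] + r[1]*r[1] + (r[2] + 1.0f)*(r[2] + 1.0f));
    assert(m > 0.0f); // r = <0, 0, -1> is the singular point of a sphere map
    return { r[0] / m + 0.5f, r[1] / m + 0.5f };
}
```

For a unit reflection vector, rx/m and ry/m stay within [-0.5, +0.5], so u and v land in [0, 1]. A surface facing the camera head-on reflects to <0, 0, 1> and samples the exact center of the sphere map at (0.5, 0.5).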